Unifying Theories of Psychedelic Drug Effects

by Link Swanson

How do psychedelic drugs produce their characteristic range of acute effects in perception, emotion, cognition, and sense of self? How do these effects relate to the clinical efficacy of psychedelic-assisted therapies? Efforts to understand psychedelic phenomena date back more than a century in Western science. In this article I review theories of psychedelic drug effects and highlight key concepts which have endured over the last 125 years of psychedelic science. First, I describe the subjective phenomenology of acute psychedelic effects using the best available data. Next, I review late 19th-century and early 20th-century theories (model psychoses theory, filtration theory, and psychoanalytic theory) and highlight their shared features. I then briefly review recent findings on the neuropharmacology and neurophysiology of psychedelic drugs in humans. Finally, I describe recent theories of psychedelic drug effects which leverage 21st-century cognitive neuroscience frameworks (entropic brain theory, integrated information theory, and predictive processing) and point out key shared features that link back to earlier theories. I identify an abstract principle which cuts across many theories past and present: psychedelic drugs perturb universal brain processes that normally serve to constrain neural systems central to perception, emotion, cognition, and sense of self. I conclude that making an explicit effort to investigate the principles and mechanisms of psychedelic drug effects is a uniquely powerful way to iteratively develop and test unifying theories of brain function.

Introduction

Lysergic acid diethylamide (LSD), N,N-dimethyltryptamine (DMT), psilocybin, and mescaline, the “classic” psychedelic drugs, can produce a broad range of effects in perception, emotion, cognition, and sense of self. How do they do this? Western science began its first wave of systematic investigations into the unique effects of mescaline 125 years ago. By the 1950s, rising interest in mescaline research had expanded to include drugs like DMT, LSD, and psilocybin in a second wave of psychedelic science. Because of their dramatic effect on the character and contents of subjective awareness, psychedelic drugs magnified the gaps in our scientific understanding of how brain chemistry relates to subjective experience. Huxley commented that our understanding circa 1954 was “absurdly inadequate” and amounted to “a mere clue,” which he hoped would soon develop into a more robust understanding: “Meanwhile the clue is being systematically followed; the sleuths, biochemists, psychiatrists, psychologists, are on the trail.” A third wave of psychedelic science has recently emerged with its own set of sleuths on the trail, sleuths who now wield an arsenal of 21st-century scientific methodologies and are uncovering new sets of clues.

Existing theoretical hurdles span five major gaps in understanding. The first gap is that we do not have an account of how psychedelic drugs can produce such a broad diversity of subjective effects. LSD, for example, can produce subtle intensifications in perception, or it can completely dissolve all sense of space, time, and self. What accounts for this atypical diversity?

The second gap is that we do not understand how pharmacological interactions at neuronal receptors, and the resulting physiological changes in the neuron, lead to large-scale changes in the activity of neural populations, in brain network connectivity, or in global brain dynamics at the systems level. What are the causal links in the multi-level pharmaco-neurophysiological chain?

The third gap is that we do not know how psychedelic drug-induced changes in brain activity, at any level of description, map onto the acute subjective phenomenological changes in perception, emotion, cognition, and sense of self. This kind of question is not unique to psychedelic drugs, but our current understanding of psychedelic drug effects clearly magnifies the disconnect between brain science and subjective experience.

Fourth, there is a gap in our understanding of the relationships between psychedelic effects and symptoms of psychoses, such as perceptual distortion, hallucination, or altered self-reference. What is the relationship between psychedelic effects and symptoms of chronic psychotic disorders?

Fifth and finally, there is a gap in our clinical understanding of the process by which psychedelic-assisted therapies improve mental health. Which psychedelic drug effects (in the brain or in subjective experience) enable clinical improvement? How?

Scientific efforts to understand diverse natural phenomena aim to produce a single theory that can account for many phenomena using a minimal set of principles. Such theories are sometimes called unifying theories. Not everyone agrees on the meaning of “unification” or “unifying theory” in science. Morrison observed that, although theory unification is a messy process which may not have discernible universal characteristics, historically successful unifying scientific theories tend to have two common features: (1) a formalized framework (quantitative mathematical descriptions of the phenomena) and (2) unifying principles (abstract concepts that unite diverse phenomena). On this conception, then, a unifying theory of psychedelic drug effects would offer a single formalized (mathematical or computational) framework capable of describing diverse psychedelic phenomena using a minimal set of unifying principles. Unfortunately, the survey of literature in this review does not locate an existing unifying theory of psychedelic drug effects. It does, however, highlight enduring abstract principles that recur across more than a century of theoretical efforts. Furthermore, it reviews recent formalized frameworks which, although currently heterogeneous and divergent, hint at the possibility of a quantitative groundwork for a future unifying theory.

The field of cognitive neuroscience offers formalized frameworks and general principles designed to track and model the neural correlates of perception, emotion, cognition, and consciousness. These broad frameworks span major levels of description in the brain and attempt to map them onto behavioral and phenomenological data. Corlett et al. argue that until this is done, “our understanding of how the pharmacology links to the symptoms will remain incomplete.” Montague et al. argue that “computational psychiatry” can remedy the “lack of appropriate intermediate levels of description that bind ideas articulated at the molecular level to those expressed at the level of descriptive clinical entities.” Seth argues that computational and theoretical approaches can facilitate a transition from correlation to explanation in consciousness science, and explains how a recent LSD, psilocybin, and ketamine study was motivated by a need to elucidate descriptions at intermediate levels somewhere between pharmacology and phenomenology: “We know there is a pharmacological link, we know there is a change in experience, and we know there is a clinical impact. But the middle bit... what are these drugs doing to the global activity of the brain..., that is the gap we are trying to fill with this study.” Taken together, the above quotations point to an emerging sense that cognitive neuroscience frameworks can address gaps in our understanding of psychedelic drug effects.

In this article I review theories of psychedelic drug effects. First, making an effort to clearly define the target explananda, I review the acute subjective phenomenological properties of psychedelic effects as well as long-term clinical outcomes from psychedelic-assisted therapies. Second, I review theories from first-wave and second-wave psychedelic science (model psychoses theory, filtration theory, and psychoanalytic theory) and identify core features of these theories. Third, I review findings from recent neurophysiological research in humans under psychedelic drugs. Finally, I review select 21st-century theories of psychedelic effects that have been developed within cognitive neuroscience frameworks; namely, entropic brain theory, integrated information theory, and predictive processing. My analysis of recent theoretical efforts highlights certain features, first conceptualized in 19th- and 20th-century theories, which remain relevant in their ability to capture both the phenomenological and neurophysiological dynamics of psychedelic effects. I describe how these enduring theoretical features are now being operationalized into formalized frameworks and could serve as potential unifying principles for describing diverse psychedelic phenomena.

Psychedelic Drug Effects

There are dozens of molecules known to cause psychedelic-like effects. This review focuses only on a limited set of drugs dubbed “classical hallucinogens” or “classic psychedelics”: LSD, DMT, psilocybin, and mescaline. Importantly, there are qualitative inter-drug differences between the effects of the four classic psychedelic drugs. Drug dosage is a primary factor in predicting the types of effects that will occur. Effects unfold temporally over a drug session; onset effects are distinct from peak effects, and some effects have a higher probability of occurring at specific timepoints over the total duration of drug effects. Furthermore, effects are influenced by non-drug factors traditionally referred to as “set and setting,” such as personality, pre-dose mood, drug session environment, and external stimuli (Figure 1).

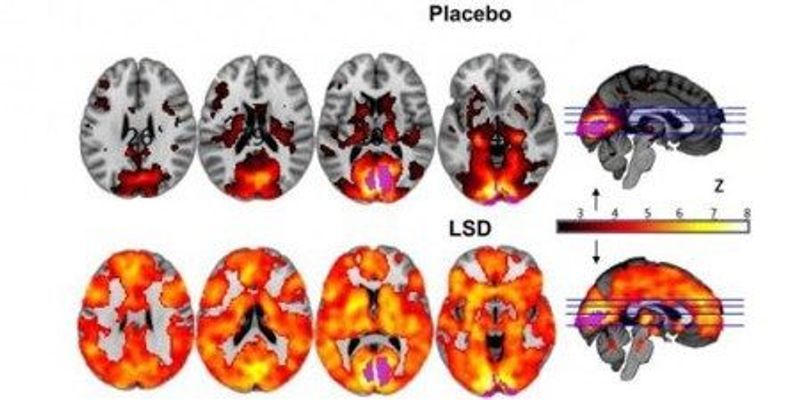

FIGURE 1

‘Extra-pharmacological’ factors that can determine psychedelic drug effects. “Trait factors may be biological [e.g., receptor polymorphisms] or psychological in nature [e.g., personality or suggestibility]. The pre-state refers to such things as anticipatory anxiety, expectations and assumptions (which account for so-called ‘placebo’ and ‘nocebo’ effects), and readiness to surrender resistances and ‘let go’ to the drug effects. In the context of psychedelic research, the pre-state is traditionally referred to as the ‘set’. State refers to the acute subjective and biological quality of the drug experience and may be measured via subjective rating scales or brain imaging. Dose relates to the drug dosage (which may be a critical determinant of state) as well as long-term outcomes. Environment relates to the various environmental influences. In the context of psychedelic research this is traditionally referred to as ‘setting’. We recognize that the environment can be influential at all stages of the process of change associated with drug action. The long-term outcomes may include such things as symptoms of a specific psychiatric condition such as depression (measured using a standard rating scale) as well as relatively pathology-independent factors such as personality and outlook.”

The above variables, while crucial, do not completely prohibit meaningful characterization of general psychedelic effects, as numerous regularities, patterns, and structure can still be identified. Indeed, common psychedelic effects can be reliably measured using validated psychometric instruments consisting of self-report questionnaires and rating scales though some of these rating scales may be in need of further validation using modern statistical techniques. Items from these rating scales are wrapped in

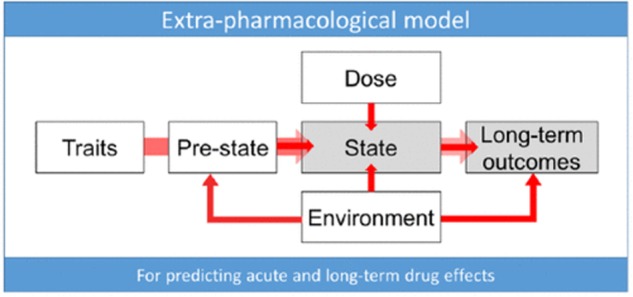

scare quotes in the following discussion in an effort to characterize the subjective phenomenology of psychedelic effects from a first-person perspective. An example of rating scale results is given in Figure 2.

FIGURE 2

Subjective rating scale items selected after psilocybin (blue) and placebo (red). “Items were completed using a visual analog scale format, with a bottom anchor of ‘no, not more than usually’ and a top anchor of ‘yes, much more than usually’ for every item, with the exception of ‘I felt entirely normal,’ which had bottom and top anchors of ‘No, I experienced a different state altogether’ and ‘Yes, I felt just as I normally do,’ respectively. Shown are the mean ratings for 15 participants plus the positive SEMs. All items marked with an asterisk were scored significantly higher after psilocybin than placebo infusion at a Bonferroni-corrected significance level of p < 0.0022.”

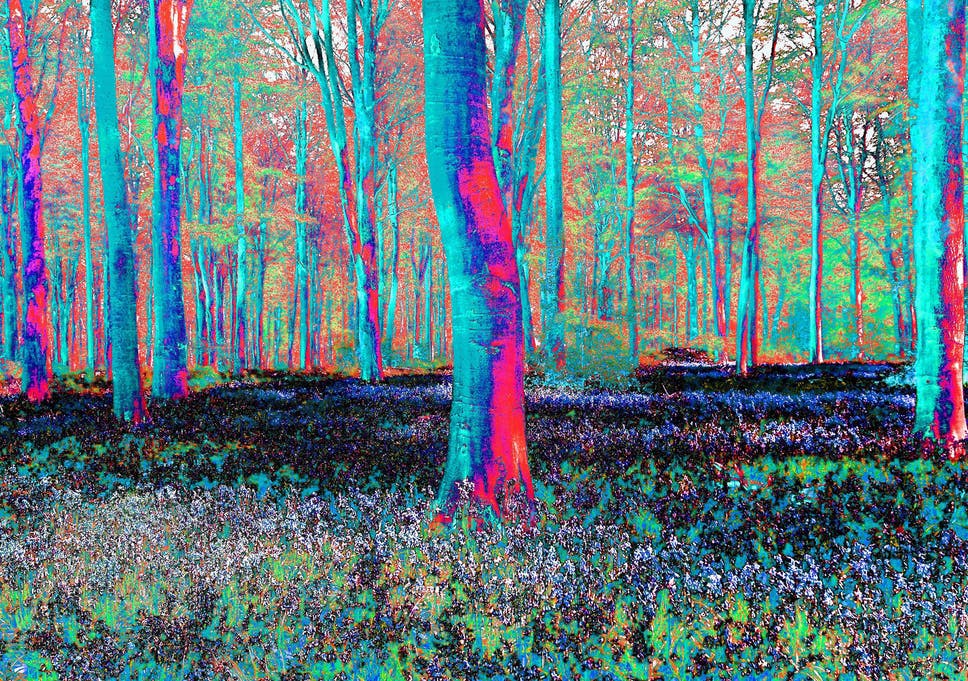

Perceptual Effects

Perceptual effects occur along a dose-dependent range from subtle to drastic. The range of different perceptual effects includes perceptual intensification, distortion, illusion, mental imagery, elementary hallucination, and complex hallucination. Intensifications of color saturation, texture definition, contours, light intensity, sound intensity, timbre variation, and other perceptual characteristics are common. The external world is experienced as if in higher resolution, seemingly more crisp and detailed, often accompanied by a distinct sense of “clarity” or “freshness” in the environment. Sense of meaning in percepts is altered, e.g., “Things around me had a new strange meaning for me” or “Objects around me engaged me emotionally much more than usual.”

Perceptual distortions and illusions are extremely common, e.g., “Things looked strange,” “My sense of size and space was distorted,” “Edges appeared warped,” or “I saw movement in things that were not actually moving.” Textures undulate in rhythmic movements, object boundaries warp and pulsate, and the apparent sizes and shapes of objects can shift rapidly. Controlled psychophysical studies have measured various alterations in motion perception, object completion, and binocular rivalry.

In what are known as elementary hallucinations, e.g., “I saw geometric patterns,” the visual field can become permeated with intricate tapestries of brightly colored, flowing latticework and other geometric visuospatial “form constants.” In complex hallucinations, visual scenes can present elaborate structural motifs, landscapes, cities, galaxies, plants, animals, and human (and non-human) beings. Complex hallucinations typically succeed elementary hallucinations and are more likely at higher doses, especially under DMT. Both elementary and complex hallucinations are more commonly reported behind closed eyelids (“closed eye visuals,” or CEVs) but can dose-dependently occur in full light with eyes open (“open eye visuals,” or OEVs). CEVs are often described as vivid mental imagery. Under psychedelic drugs, mental imagery becomes augmented and intensified, e.g., “My imagination was extremely vivid,” and is intimately linked with emotional and cognitive effects. “Sometimes sensible film-like scenes appear, but very often the visions consist of scenes quite indescribable in ordinary language, and bearing a close resemblance to the paintings and sculptures of the surrealistic school.” Psychedelic mental imagery can be modulated by both verbal and musical auditory stimuli. Synaesthesia has been reported, especially visual phenomena driven by auditory stimuli, e.g., “Sounds influenced the things I saw,” but classification of these effects as true synaesthesia is actively debated.

Somatosensory perception can be drastically altered, e.g., “I felt unusual bodily sensations,” including changes in body image, size, shape, and location. Sense of time and causal sequence can lose their usual linear cause-effect structure, making it difficult to track the transitions between moments.

Overall, the perceptual effects of psychedelics are extremely varied, multimodal, and easily modulated by external stimuli. Perceptual effects are tightly linked with emotional and cognitive effects.

Emotional Effects

Emotional psychedelic effects are characterized by a general intensification of feelings, increased (conscious) access to emotions, and a broadening in the overall range of emotions felt over the duration of the drug session. Psychedelics can induce unique states of euphoria characterized by involuntary grinning, uncontrollable laughter, silliness, giddiness, playfulness, and exuberance. Negatively experienced emotions, e.g., “I felt afraid” or “I felt suspicious and paranoid,” are often accompanied by a general sense of losing control, e.g., “I feared losing control of my mind.” However, the majority of emotional psychedelic effects in supportive contexts are experienced as positive. Both LSD and psilocybin can bias emotion toward positive responses to social and environmental stimuli. Spontaneous feelings of awe, wonder, bliss, joy, fun, excitement (and yes, peace and love) are also consistent themes across experimental and anecdotal reports. In supportive environments, classic psychedelic drugs can promote feelings of trust, empathy, bonding, closeness, tenderness, forgiveness, acceptance, and connectedness. Emotional effects can be modulated by all types of external stimuli, especially music.

Cognitive Effects

Precise characterization of cognitive psychedelic effects has proven enigmatic and paradoxical. Acute changes in the normal flow of linear thinking, e.g., “My thinking was muddled” or “My thoughts wandered freely,” are extremely common. This is reflected in reduced performance on standardized measures of working memory and directed attention; however, reductions in performance have been shown to occur less often in individuals with extensive past experience of the drugs’ effects. Crucially, cognitive impairments related to acute psychedelic effects are dose-dependent. Extremely low doses, known as microdoses, have been anecdotally associated with improvements in cognitive performance, a claim that urgently requires empirical verification through controlled research. Theoretical attempts to account for the reported effects of microdosing have yet to emerge in the literature and therefore present an important opportunity for future theoretical endeavors.

Certain cognitive traits associated with creativity can increase under psychedelics, such as divergent thinking, use of unlikely language patterns or word associations, expansion of semantic activation, and attribution of meaning to perceptual stimuli, especially musical stimuli. Primary-process thinking, a widely validated psychological construct associated with creativity, is characterized phenomenologically by “image fusion; unlikely combinations or events; sudden shifts or transformations of images; and contradictory or illogical actions, feelings, or thoughts.” Psilocybin and LSD have been shown to increase primary-process thinking as well as the subjective bizarreness and dreamlike nature of mental imagery associated with verbal stimuli. Cognitive flexibility (or “loosening of cognition”) and optimism can remain for up to 2 weeks after the main acute drug effects have dissipated. Furthermore, long-term increases in creative problem-solving ability and personality trait openness have been measured after just one psychedelic experience.

Ego Effects and Ego Dissolution Experiences

Kluever observed that under peyote “the line of demarcation drawn between object and subject in normal state seemed to be changed. The body, the ego, became objective in a certain way, and the objects became subjective.” Similar observations continued throughout first-wave and second-wave psychedelic science. Importantly, effects on sense of self and ego occur along a dose-dependent range spanning from subtle to drastic. Subtle effects are described as a softening of ego with increased insight into one’s own habitual patterns of thought, behavior, personal problems, and past experiences; these effects were utilized in psycholytic psychotherapy. Drastic ego effects, known as ego dissolution, are described as the dissolution of the sense of self and the loss of boundaries between self and world, e.g., “I felt like I was merging with my surroundings,” “All notion of self and identity dissolved away,” “I lost all sense of ego,” “I experienced a loss of separation from my environment,” or “I felt at one with the universe.” These descriptions resemble non-drug mystical-type experiences; however, the extent of overlap here remains an open question. Ego dissolution is more likely to occur at higher doses. Furthermore, certain psychedelic drugs cause ego dissolution experiences more reliably than others; psilocybin, for example, was found to produce full ego dissolution more reliably than LSD. Ego dissolution experiences can be driven and modulated by external stimuli, most notably music. Interestingly, subjects who experienced complete ego dissolution in psychedelic-assisted therapy were more likely to show positive clinical outcomes as well as long-term changes in life outlook and the personality trait openness.

Clinical Efficacy and Long-Term Effects

Mescaline-assisted therapies showed promising results during first-wave psychedelic science, and this trend continued through second-wave psychedelic research on LSD-assisted therapies. Recent studies have produced significant evidence for the therapeutic utility of psychedelic drugs in treating a wide range of mental health issues, including anxiety and depression, obsessive-compulsive disorder, and addiction to alcohol and tobacco. In many clinical studies, ego-dissolution experience has correlated with positive clinical outcomes.

Remarkably, as mentioned above, a single psychedelic experience can increase optimism for at least 2 weeks after the session and can produce lasting changes in personality trait openness. A study of regular (weekly) ayahuasca users showed improved cognitive functioning and increased positive personality traits compared with matched controls. Interestingly, these outcomes may expand beyond sanctioned clinical use, as illicit users of classic psychedelic drugs within the general population self-report positive long-term benefits from their psychedelic experiences, are statistically less likely to evidence psychological distress and suicidality, and show an overall lower occurrence of mental health problems in general.

Summary

The above evidence demonstrates the broad diversity of acute subjective effects that classic psychedelic drugs can produce in perceptual, emotional, and cognitive domains. Unique changes in sense of self, ego, body image, and personal meaning are particularly salient themes. How do these molecules produce such dramatic effects? What are the relationships between acute perceptual, emotional, cognitive, and self-related effects? What is the link between acute effects and long-term changes in mental health, personality, and behavior? Theories addressing these questions emerged as soon as Western science recognized the need for a scientific understanding of psychedelic drug effects beginning in the late 19th century.

19th and 20th Century Theories of Psychedelic Drug Effects

The effects described above are what captured the interest of first-wave and second-wave psychedelic scientists, and the theories they developed in their investigations have two central themes. The first theme is the observation that psychedelic effects share descriptive elements with symptoms of psychoses, such as hallucination, altered self-reference, and perceptual distortions. This theme forms the basis of model psychoses theory and is what motivated the adoption of the term “psychotomimetic drugs.” The second theme is the observation that psychedelic drugs seem to expand the total range of contents presented subjectively in our perceptual, emotional, cognitive, and self-referential experience. This theme forms the basis of filtration theory and is what motivated the adoption of the term “psychedelic drugs.” A third theoretical account uses psychoanalytic theory to address the expanded range of mental phenomena produced by psychedelic drugs as well as the shared descriptive elements with symptoms of psychoses. The next section reviews these themes along with their historically associated theories before tracing their evolution into third-wave (21st-century) psychedelic science.

Model Psychoses Theory

When Lewin “discovered” the peyote cactus, his reports caught the attention of adventurous 19th-century scientists like Prentiss and Morgan, Mitchell, and Ellis, who promptly obtained samples and began consuming the cactus and observing its effects on themselves. When Heffter isolated mescaline from the peyote cactus and Spaeth paved the way for laboratory synthesis, scientists began systematically dosing themselves with mescaline and publishing their findings in medical journals. Kluever, intrigued by the approach of Knauer and Maloney, ingested peyote at the University of Minnesota Psychological Laboratory and, after the effects had taken hold, completed standard psychophysical measures. Kluever argued that systematic investigations into the neural mechanisms of mescaline effects would help neurology “elucidate more general questions of the psychology and pathology of perception.” However, it was the pathology aspect, not the general psychology questions, which became the dominant focus of ensuing mescaline research paradigms.

Model psychoses theory began long before any of the classic psychedelic drugs became known to Western science. Moreau (1845) linked hashish effects with mental illness, and Kraepelin founded pharmacopsychology by dosing himself and his students with various psychoactive drugs in the laboratory of Wilhelm Wundt. These scientists hoped to study psychotic symptoms using drugs to induce “model psychoses” (1) in themselves, to gain first-person knowledge of the phenomenology of psychotic symptoms by “administering to one another such substances as will produce in us transitory psychoses,” and (2) in normal research subjects, allowing for laboratory behavioral observations on how the symptoms emerge and dissipate. Kraepelin and colleagues attempted to model psychoses using many drugs, e.g., “tea, alcohol, morphine, trional, bromide, and other drugs,” yet Kraepelin’s pupils Knauer and Maloney argued that these drugs “unfortunately produce mental states which have little similarities to actual insanities” and contended instead that mescaline was unique in its ability to truly model psychoses. The dramatic subjective effects of mescaline invigorated the model psychoses paradigm. Growing demand for the ideal chemical agent for model psychoses eventually motivated Sandoz Pharmaceuticals to bring LSD to market in the 1940s.

Importantly, model psychoses theory was not initially a theory of drug effects; it was an idealistic paradigm for researching psychoses that was already in use before Western science “discovered” classic psychedelic drugs. Nonetheless, it seeded the idea that psychedelic effects themselves could be explained in terms of psychopathology and motivated a search for common neural correlates. The founding figures of neuropharmacology were driven by questions regarding the relationship between psychoactive drugs and endogenous neurochemicals. The putative psychoses-mimicking effects of LSD and mescaline inspired the idea that psychotic symptoms might be caused by a “hypothetical endotoxin” or some yet-unknown endogenous neurochemical gone out of balance. The discovery that LSD can antagonize serotonin led to the hypothesis that the effects of LSD are serotonergic and, simultaneously, to the historic hypothesis that serotonin might play a role in regulating mental function.

At the 1955 Second Conference on Neuropharmacology, the whole class of drugs was dubbed “psychotomimetic.” Interestingly, the word “mimetic” refers to imitating, mimicking, or exhibiting mimicry, which is the act of appearing as something else, for example, when one species mimics the appearance or behavior of another. On this literal reading of the term, psychotomimetic drug effects would merely mimic or imitate psychoses, appearing as if they were psychoses. However, to mimic is not to model. A model intends to capture important structural or functional principles of the entity or phenomenon that it models. A mimic, by contrast, merely creates the illusion that it possesses the properties it mimics. Thus, the term “psychotomimetic” implies that the effects of these drugs merely resemble psychoses but do not share functional or structural properties in their underlying biology or phenomenology. Nonetheless, LSD and mescaline were used as models to investigate psychotic symptoms. Yet the scientific utility of drug models hinges on our understanding of the mechanisms underpinning the drugs’ effects; we still need a theory of how psychotomimetic drugs work. A subtle explanans-explanandum circularity can come into play here, in which psychoses are explained using drug models yet the drug effects are explained using theories of psychoses. Further complicating the matter is the clear difference between acutely induced drug effects and the gradual development of a chronic mental illness. This cluster of conceptual challenges poured fuel on the debates about the merits of drug-induced model psychoses, which in 1957 had already “smoldered for nearly 50 years.”

An additional conceptual challenge was the fact that mescaline had for years shown promise in treating psychopathologies, and LSD was gaining popularity for pharmaceutically enhanced psychotherapy. Model psychoses theory needed to explain how drugs putatively capable of inducing psychotic symptoms could simultaneously be capable of treating them, what Osmond termed “the hair of the dog problem.” In fact, to this day, “the apparent paradox by which the same compound can be both a model of, and yet a treatment for, psychopathology has never been properly addressed.” Taken together, the above cluster of conceptual challenges drove Osmond to doubt his own prior work on model psychoses, and he declared “psychotomimetic” an outmoded term, arguing that the effects of these drugs could not be captured wholly in terms of psychopathology:

If mimicking mental illness were the main characteristic of these agents, psychotomimetics would indeed be a suitable generic term. It is true that they do so, but they do much more.

Filtration Theory

Osmond argued that the psychotomimetic class of drugs needed a more appropriate name: “My choice, because it is clear, euphonious, and uncontaminated by other associations, is psychedelic, mind-manifesting.” But how exactly should we understand psychedelic effects as mind-manifesting? Osmond’s choice of name was directly influenced by his friend Aldous Huxley, who described the core idea to Osmond in the following personal letter dated April 10, 1953:

Dear Dr. Osmond,

It looks as though the most satisfactory working hypothesis about the human mind must follow, to some extent, the Bergsonian model, in which the brain with its associated normal self, acts as a utilitarian device for limiting, and making selections from, the enormous possible world of consciousness, and for canalizing experience into biologically profitable channels. Disease, mescaline, emotional shock, aesthetic experience and mystical enlightenment have the power, each in its different way and in varying degrees, to inhibit the function of the normal self and its ordinary brain activity, thus permitting the other world to rise into consciousness.

Yours sincerely,

Aldous Huxley

Huxley’s letter can help unpack the intended “mind-manifesting” etymology of Osmond’s new term “psychedelic.” Huxley saw the brain as a biological device engaged in a continuous process of elimination and inhibition that sustains the normal self of everyday waking experience and thereby maximizes adaptive fit. Huxley’s chosen metaphor for visualizing this was the “cerebral reducing valve” (Figure 3).

FIGURE 3

Aldous Huxley’s “cerebral reducing valve.” On the ‘inlet’ (right) side of the cerebral reducing valve is a vast ocean of all possible perceptual, emotional, and cognitive experiences. On the ‘outlet’ (left) side is our moment-to-moment stream of experience in normal waking life. Mechanisms inside the valve ‘reduce’ the character and contents of experience, ‘canalizing’ the ocean of possible experience into a more limited stream of waking consciousness aimed at maximum biological utility.

In Huxley's words, "What I have called the cerebral reducing valve is a normal brain function that limits our mental processes to an awareness, most of the time, of what is biologically useful." Huxley argued that this normal brain function emerges developmentally over the course of psychological maturation, so that for a period during childhood, before the cerebral reducing valve has fully developed, there is this capacity to live in a kind of visionary world. Once the valve is fully developed, however, normal waking life becomes restricted to a world fabricated by our everyday, biologically useful, and socially conditioned perceptions, thoughts, and feelings.

Huxley borrowed the core idea from 19th-century filtration theory accounts of various mental phenomena: according to filtration theorists, consciousness is ordinarily kept narrow by biological and psychological selection processes that exclude a great deal of subconscious material. Filtration theorists include founding figures of psychopharmacology, psychology, and parapsychology, along with early 20th-century philosophers such as Bergson. Bergson applied his own filtration framework to drug effects in his brief response to James's glowing descriptions of what it is like to inhale nitrous oxide. James's peculiar state of mind, explained Bergson, should be thought of as a latent potential of the brain/mind, which nitrous oxide simply "brought about materially, by an inhibition of what inhibited it, by the removing of an obstacle; and this effect was the wholly negative one produced by the drug." Huxley picked up Bergson's line of thinking and eventually convinced Osmond that it was important to reflect this principle in scientific descriptions of the effects of LSD and mescaline. Smythies also subscribed to this idea, stating that "mescaline may be supposed to inhibit that function in the brain which specifically inhibits the mescaline phenomena from developing in the sensory fields."

Thus, Osmond's proposed name, "psychedelic," was intended to capture the spirit of filtration theory. In this new descriptive model, psyche- (mind) -delic (manifesting) drugs manifest the mind by inhibiting certain brain processes which normally impose inhibitory constraints on our perceptions, emotions, thoughts, and sense of self. Osmond and Huxley both found this principle highly applicable to their own first-person experience of the effects of mescaline and LSD: the expanded range of feelings, intensification of perceptual stimuli, vivid vision-like mental imagery, unusual thoughts, and expanding (or dissolving) sense of self and identity.

Osmond argued that his "mind-manifesting" description had further theoretical virtues that could address the conceptual challenges of model psychoses theory and improve our understanding of (1) the diverse range of psychedelic effects, (2) their relationship to psychotic symptoms, and (3) their role in psychedelic-assisted therapies. First, if the drug pharmacologically disrupts hypothetical inhibitory brain mechanisms that normally attenuate internal and external stimuli, then the kinds of effects it produces should depend on the kinds of stimuli in the system, which is consistent with the diverse range of effects across multiple perceptual modalities, emotional experience, and cognition.

Second, the brain's selective filtration mechanisms, while evolutionarily adaptive and biologically useful, could become pathological in two fundamentally distinct ways. In one direction, a chronically overactive filter limits too much of the mind, causing a rigid, dull, neurotic life in which mental contents become overly restricted to those enumerated in "the Sears-Roebuck catalog which constitutes the conventionally real world." In the other direction, a chronically underactive or "leaky" filter places too few constraints on the mind and allows too much "Mind at Large" to enter conscious awareness, potentially resulting in perceptual instability, cognitive confusion, or hallucination. This picture helped Huxley and Osmond understand the relationship between psychedelic phenomena and psychotic phenomena: temporarily opening the cerebral reducing valve with psychedelics could produce mental phenomena that resembled symptoms of chronic natural psychoses precisely because both resulted from (acute or chronic) reductions in brain filtration mechanisms.

Third and finally, filtration theory addressed the paradoxical "hair of the dog" issue, i.e., why drugs that mimic psychoses can aid psychotherapy, which, as described in the previous section, was a conceptual challenge for model psychoses theory. The solution to the paradox lay in the filtration-theory idea that psychedelic drugs temporarily "disable" brain filtration mechanisms, which could allow patients and therapists to work outside of the patient's everyday (pathological) inhibitory mechanisms. Thus, filtration theory offered a way to understand psychedelic effects that was consistent with both their psychotomimetic properties and their therapeutic utility.

Osmond and Huxley argued that filtration theory concepts were fully consistent with the subjective phenomenology, psychotomimetic capability, and therapeutic efficacy of psychedelic drugs. However, it remains unclear exactly what the brain is filtering, and consequently what emerges when the filter is pharmacologically perturbed by a psychedelic drug. According to Huxley, LSD and mescaline "inhibit the function of the normal self and its ordinary brain activity, thus permitting the other world to rise into consciousness." Huxley spoke of the brain as a device that filters the world, such that when the filter is removed we experience "more of reality." Osmond's "mind-manifesting" (psyche-delic) name, by contrast, suggests that these drugs permit latent aspects of mind to rise into conscious awareness. So which is it? Do psychedelic drugs manifest latent aspects of mind or of world? How we answer this question will crucially determine our ontological and epistemological conclusions regarding the nature of psychedelic experience. Huxley and Osmond did not make this clear. Huxley seems to favor the position that psychedelic experience reveals a wider ontological reality and grants epistemic access to greater truth. Osmond's view, on which these drugs reveal normally hidden aspects of mind, seems less radical, more compatible with materialist science, and less epistemically and ontologically committed. Still, if mind provides us with access to world, then lifting restrictions on mind could in principle expand our access to world. This important point resurfaces in the Predictive Processing section below.

Psychoanalytic Theory

Freud developed an elaborate theoretical account of mental phenomena which, like filtration theory, placed great emphasis on inhibition mechanisms in the nervous system. Freud divided the psyche into two fundamentally distinct modes of activity: the primary process and the secondary process. In the primary process, the exchange of "neuronal energy" is "freely mobile," and its psychological dynamics are characterized by disorder, vagueness, conceptual paradox, symbolic imagery, intense emotions, and animistic thinking. In the secondary process, by contrast, the exchange of neuronal energy is "bound," and its psychological dynamics are characterized by order, precision, conceptual consistency, controlled emotions, and rational thinking. Freud hypothesized that the secondary process is maintained by an organizing neural "mass" called the ego, which contains and exerts control over the primary process by binding primary process activity into its own pattern of activity. Freud hypothesized that secondary process neural organization, sustained by the ego, is required for certain aspects of perceptual processing, directed attention, reality-testing, sense of linear time, and higher cognitive processes. When Freud's ego is suppressed, such as during dream sleep, wider worlds of experience can emerge, but secondary process functions are lost. The secondary process and its supporting neural organizing pattern, the ego, emerge during ontogenetic development and solidify with adult maturity: "A unity comparable to the ego cannot exist from the start; the ego has to be developed." Furthermore, pathological characteristics can emerge when Freud's ego restricts either too much or too little of the primary process.

Freud himself was apparently uninterested in psychedelic drugs and instead emphasized dreams as "the royal road to a knowledge of the unconscious activities of the mind." Nonetheless, psychedelic drugs produce dreamlike visions and modes of cognition that feature symbolic imagery, conceptual paradox, and other hallmark characteristics of the primary process. How did other psychoanalytic theorists describe psychedelic drug effects? The core idea is that psychedelic drugs interfere with the structural integrity of the ego and thereby reduce its ability to suppress the primary process and support the secondary process. This "frees" the primary process, which then spills into conscious awareness, resulting in perceptual instability, wildly vivid imagination, emotional intensity, conceptual paradox, and loss of usual self-boundaries. Due in part to the close resemblance between psychedelic effects and primary process phenomena, psychoanalytic theory became the framework of choice during the mid-20th-century boom in psychedelic therapy. Psychedelic ego effects, which range from a subtle loosening to a complete dissolution of ego boundaries, were found to be valuable tools in psychotherapy because of their capacity to perturb the ego and allow primary process phenomena to emerge.

But how do psychedelic drugs disrupt the structure of the ego? Freud hypothesized that the organizational structure of the ego rests upon a basic perceptual schematic of the body and its surrounding environment. Perceptual signals are continuously "bound" and integrated into the somatic boundaries of the ego. Savage speculated that LSD's perceptual effects and ego effects are tightly linked:

"LSD acts by altering perception. Continuous correct perception is necessary to maintain ego feeling and ego boundaries. Perception determines our ego boundaries. Disturbances in perception caused by LSD make it impossible for the ego to integrate the evidence of the senses and to coordinate its activities..."

Klee expanded Savage's insights into a set of hypotheses aimed at elucidating the neurobiological mechanisms of a Freudian "stimulus barrier" and its dissolution under LSD:

"Such barriers would presumably consist of processes limiting the spread of excitation between different functional areas of the brain. The indications are that LSD, in some manner, breaks down these stimulus barriers of which Freud spoke. Nor is this merely a figure of speech. There is some reason to suspect that integrative mechanisms within the central nervous system (CNS) which handle inflowing stimuli are no longer able to limit the spread of excitation in the usual ways. We might speculate that LSD allows greater energy exchanges between certain systems than normally occurs, without necessarily raising the general level of excitation of all cortical and subcortical structures."

Freud hypothesized that the ego is sustained by a delicate balance of "neuronal energy" which critically depends on integrative mechanisms that process inflowing sensory stimuli and "bind" neural excitation into functional structures within the brain. Psychedelic drugs, according to Savage and Klee, perturb integrative mechanisms that normally bind and shape endogenous and exogenous excitation into the structure of the ego. As we will see below, Klee's ideas strongly anticipate many neurophysiological findings and theoretical themes from 21st-century psychedelic science.

Summary

From the above analysis of first-wave and second-wave theories I have identified four recurring theoretical features which could potentially serve as unifying principles. One feature is the hypothesis that psychedelic drugs inhibit a core brain mechanism that normally functions to "reduce," "filter," or "constrain" mental phenomena into an evolutionarily adaptive container. A second feature is the hypothesis that this core brain mechanism can behave pathologically, either in the direction of too much, or too little, constraint imposed on perception, emotion, cognition, and sense of self. A third feature is the hypothesis that psychedelic phenomena and symptoms of chronic psychoses share descriptive elements because they both involve situations of relatively unconstrained mental processes. A fourth feature is the hypothesis that psychedelic drugs have therapeutic utility via their ability to temporarily inhibit these inhibitory brain mechanisms. But how are these inhibitory mechanisms realized in the brain?

Neuropharmacology and Neurophysiological Correlates of Psychedelic Drug Effects

Klee recognized that his hypotheses, inspired by psychoanalytic theory and LSD effects, required neurophysiological evidence:

"As far as I am aware, however, adequate neurophysiological evidence is lacking. The long awaited millennium in which biochemical, physiological, and psychological processes can be freely correlated still seems a great distance off."

What clues have recent investigations uncovered?

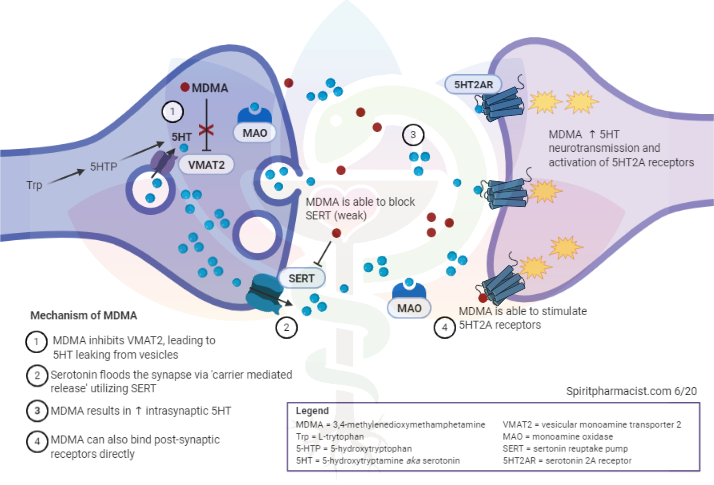

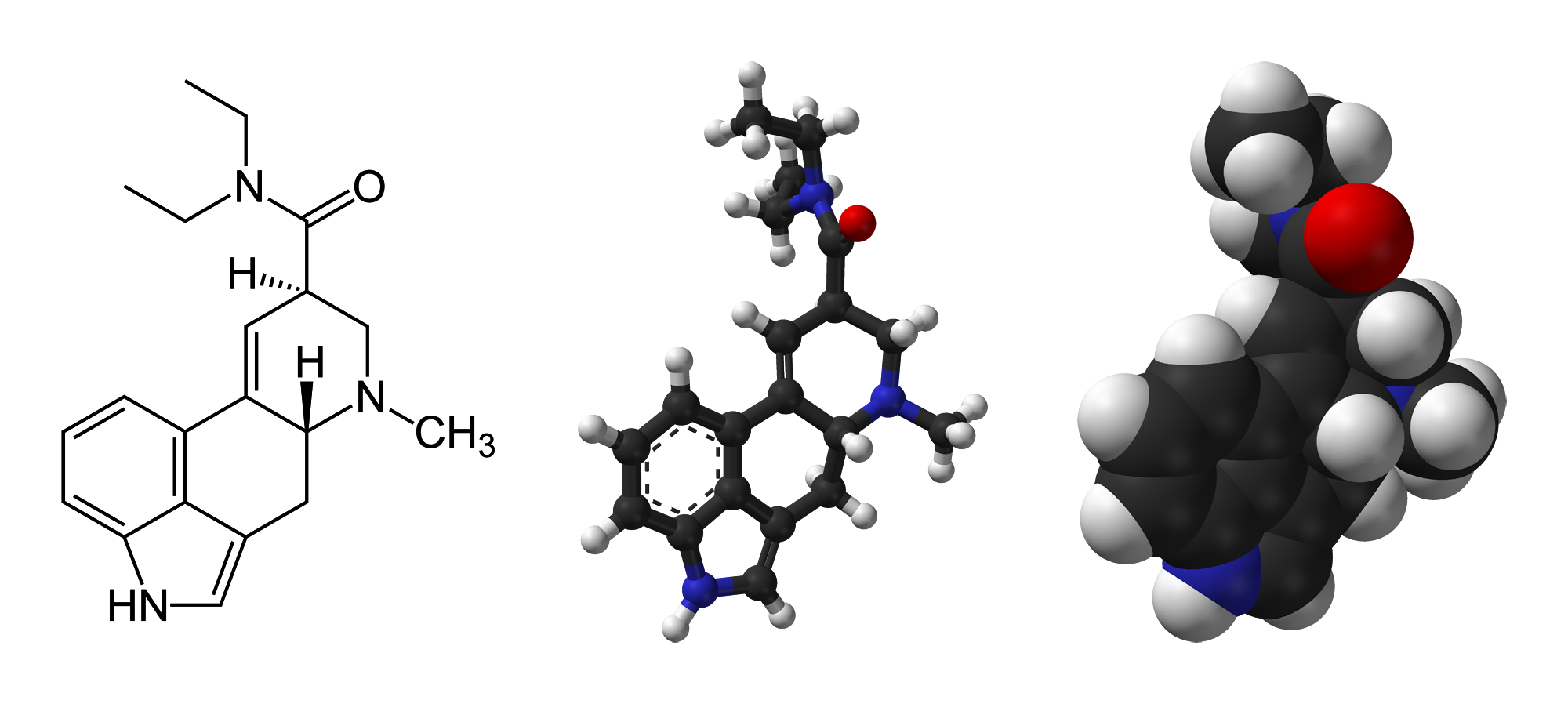

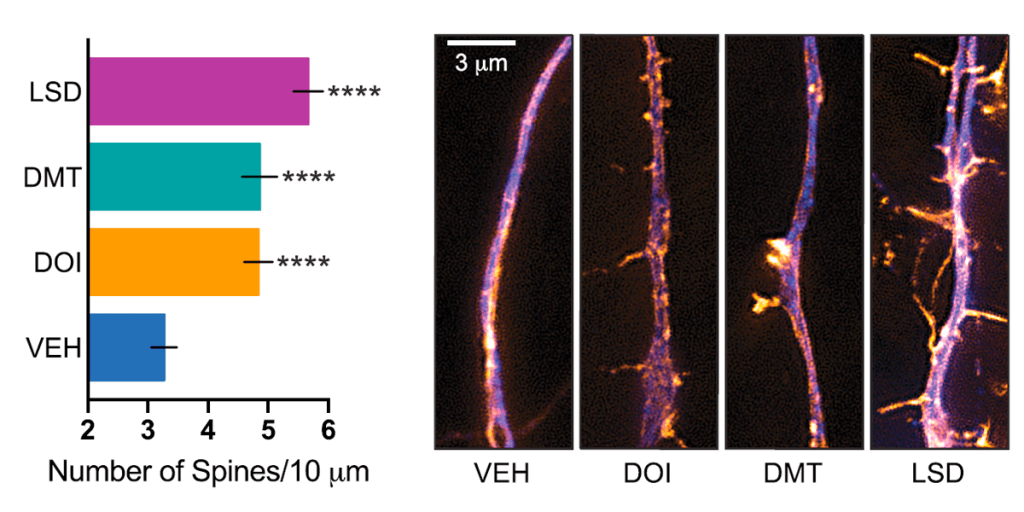

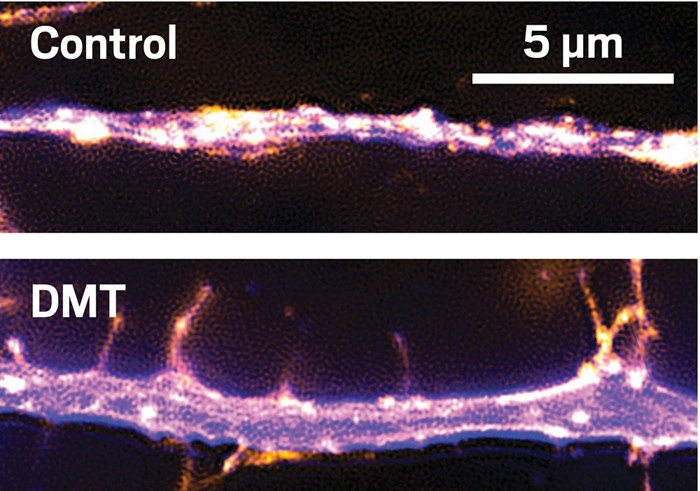

A psychedelic drug molecule affects a neuron by binding to receptors on the neuron's surface and altering their conformation. The receptor interaction most implicated in producing classic psychedelic drug effects is agonist or partial agonist activity at serotonin (5-HT) receptor type 2A (5-HT2A). A molecule's 5-HT2A affinity and agonist activity predict its potential for (and potency of) subjective psychedelic effects. When a psychedelic drug's 5-HT2A agonist activity is intentionally blocked using a 5-HT2A antagonist drug (e.g., ketanserin), the subjective effects are blocked or attenuated in humans under psilocybin, LSD, and ayahuasca. Importantly, while the above evidence makes it clear that 5-HT2A activation is a necessary mediator of the hallmark subjective effects of classic psychedelic drugs, this does not entail that 5-HT2A activation is the sole neurochemical cause of all subjective effects. For example, 5-HT2A activation might trigger neurochemical modulations "downstream" (e.g., changes in glutamate transmission), which could also play causal roles in producing psychedelic effects. Moreover, most psychedelic drug molecules activate other receptors in addition to 5-HT2A, and these activations may contribute importantly to the overall profile of subjective effects even if 5-HT2A activation is required for those effects to occur.
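The logic of the antagonist-blockade experiments can be illustrated with the textbook equilibrium model of reversible competitive binding (the Gaddum equation). This is a minimal sketch under strong assumptions: the antagonist is treated as purely competitive and reversible at a single receptor population, occupancy stands in for activation, and every dissociation constant and concentration (`AGONIST_KD`, `ANTAGONIST_KD`, the doses) is a hypothetical number in arbitrary units, not a measured value for any real drug. It deliberately ignores the downstream signaling and additional receptors discussed above.

```python
def agonist_occupancy(agonist_conc, agonist_kd, antagonist_conc=0.0, antagonist_kd=1.0):
    """Equilibrium fraction of receptors bound by an agonist (Gaddum equation).

    occupancy = (A / K_A) / (1 + A / K_A + B / K_B), where A and B are the
    agonist and competitive-antagonist concentrations and K_A, K_B their
    dissociation constants (all in the same, here arbitrary, units).
    """
    a = agonist_conc / agonist_kd
    b = antagonist_conc / antagonist_kd
    return a / (1.0 + a + b)


# Hypothetical constants and doses, for illustration only.
AGONIST_KD = 1.0
ANTAGONIST_KD = 0.5

alone = agonist_occupancy(2.0, AGONIST_KD)
blocked = agonist_occupancy(2.0, AGONIST_KD, antagonist_conc=20.0, antagonist_kd=ANTAGONIST_KD)
print(f"agonist alone: {alone:.2f}, with antagonist pretreatment: {blocked:.2f}")
```

With these made-up numbers the agonist's occupancy falls from 2/3 to 2/43 (about 0.67 to about 0.05), which is the qualitative pattern of an attenuated effect under antagonist pretreatment.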

How does psychedelic drug-induced 5-HT2A receptor agonism change the behavior of the host neuron? Generally, 5-HT2A activation has a depolarizing effect on the neuron, making it more excitable (more likely to fire). Importantly, this does not necessarily entail that 5-HT2A activation will have an overall excitatory effect throughout the brain, particularly if the excitation occurs in inhibitory neurons. This consideration, captured by the adage "one neuron's excitation is another neuron's inhibition," should be kept in mind when tracing causal links in the pharmaco-neurophysiology of psychedelic drug effects.
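The adage can be made concrete with a toy threshold-linear rate model in which one inhibitory interneuron projects onto one pyramidal cell. The function names, drives, and connection weight below are hypothetical and chosen only for illustration; nothing here models 5-HT2A signaling specifically. The point is only that adding depolarizing drive to the interneuron raises its own firing rate while lowering the pyramidal cell's rate.

```python
def relu(x):
    """Threshold-linear transfer: firing rates cannot go below zero."""
    return max(0.0, x)


def steady_state_rates(drive_to_interneuron, drive_to_pyramidal=1.0, w_inh=2.0):
    """Feedforward toy circuit: inhibitory interneuron (I) -> pyramidal cell (E).

    Returns (pyramidal_rate, interneuron_rate) in arbitrary units.
    """
    r_inh = relu(drive_to_interneuron)
    r_pyr = relu(drive_to_pyramidal - w_inh * r_inh)
    return r_pyr, r_inh


baseline = steady_state_rates(drive_to_interneuron=0.1)
depolarized = steady_state_rates(drive_to_interneuron=0.4)  # extra excitatory drive to I
print("baseline (E, I):", baseline)
print("interneuron excited (E, I):", depolarized)
```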

In mammalian brains, neurons tend to

fire together in synchronized rhythms known as temporal oscillations (brain waves). MEG and EEG equipment measure the electromagnetic disturbances produced by the temporal oscillations of large neural populations and these measurements can be quantified according to their amplitude (power) and frequency (timing). Specific combinations of frequency and amplitude can be correlated with distinct brain states, including waking

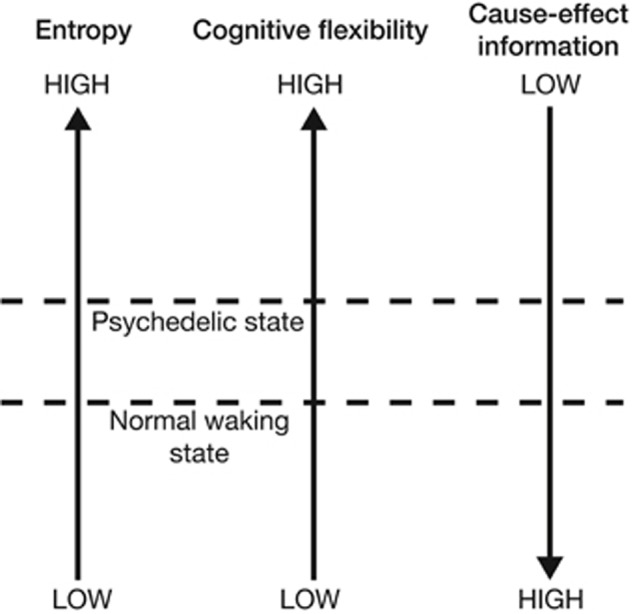

resting state, various attentional tasks, anesthesia, REM sleep, and deep sleep. In what ways do temporal oscillations change under psychedelic drugs? MEG and EEG studies consistently show reductions in oscillatory power across a broad frequency range under ayahuasca, psilocybin, and LSD. Reductions in the power of alpha-band oscillations, localized mainly to parietal and occipital cortex, have been correlated with intensity of subjective visual effects, e.g.

I saw geometric patterns, or,

My imagination was extremely vivid, under psilocybin and ayahuasca. Under LSD, reductions in alpha power still correlated with intensity of subjective visual effects but associated alpha reductions were more widely distributed throughout the brain. Furthermore, ego-dissolution effects and mystical-type experiences, e.g.

I experienced a disintegration of my self, or ego, or,

The experience had a supernatural quality, have been correlated with reductions in alpha power localized to anterior and posterior cingulate cortices and the parahippocampal regions under psilocybin and throughout the brain under LSD.
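For readers unfamiliar with band-power measures, the following minimal sketch shows what a "reduction in alpha power" means numerically. It assumes synthetic signals (a 10 Hz rhythm plus identical white noise, with the rhythm's amplitude lowered in the hypothetical "drug" condition), an assumed 250 Hz sampling rate, and the conventional 8-12 Hz alpha band. Published MEG/EEG analyses use more elaborate spectral estimators, artifact rejection, and source localization; this is not their pipeline.

```python
import numpy as np

FS = 250.0                 # sampling rate in Hz (hypothetical)
ALPHA_BAND = (8.0, 12.0)   # conventional alpha range in Hz


def band_power(signal, fs, band):
    """Mean power within a frequency band from a simple (unscaled) FFT periodogram."""
    spectrum = np.abs(np.fft.rfft(signal)) ** 2 / signal.size
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fs)
    in_band = (freqs >= band[0]) & (freqs <= band[1])
    return float(spectrum[in_band].mean())


rng = np.random.default_rng(0)
t = np.arange(2500) / FS                                # 10 s of synthetic signal
noise = rng.normal(scale=1.0, size=t.size)
placebo = 3.0 * np.sin(2 * np.pi * 10.0 * t) + noise  # strong 10 Hz rhythm
drug = 1.0 * np.sin(2 * np.pi * 10.0 * t) + noise     # same noise, weaker rhythm

print("alpha power, placebo:", band_power(placebo, FS, ALPHA_BAND))
print("alpha power, drug:   ", band_power(drug, FS, ALPHA_BAND))
```

Because the noise is identical in both conditions, the difference in band power comes entirely from the weaker 10 Hz rhythm, which is what a correlational study would then relate to questionnaire ratings.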

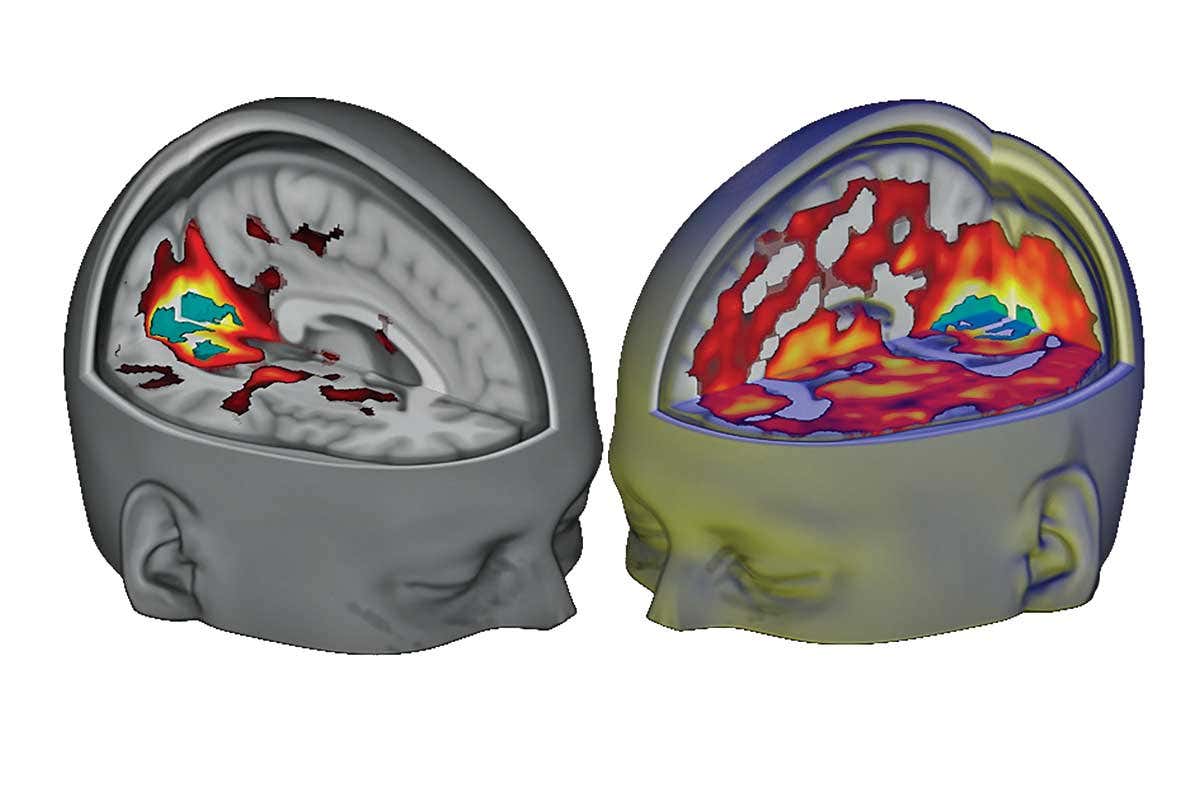

The concept of functional connectivity rests upon fMRI observations of temporal correlations between activity in spatially remote regions of the brain, which form highly structured patterns (brain networks). Imaging brains during perceptual or cognitive task performance reveals patterns of functional connectivity known as functional networks, e.g., the control network, dorsal attention network, ventral attention network, visual network, auditory network, and so on. Imaging brains in taskless resting conditions reveals resting-state functional connectivity (RSFC) and structured patterns of RSFC known as resting state networks (RSNs). One particular RSN, the default mode network (DMN), increases its activity in the absence of tasks and decreases it during task performance. DMN activity is strong during internally directed cognition and a variety of other "metacognitive" functions. In normal waking states, DMN activation exhibits "inverse coupling," or anticorrelation, with the activation of task-positive networks (TPNs), meaning that the DMN and TPNs are often mutually exclusive: one deactivates as the other activates, and vice versa.
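Functional connectivity between two regions is commonly operationalized as the Pearson correlation between their activity time series, so anticorrelation shows up as a negative coefficient. The sketch below assumes two synthetic signals, labeled `dmn` and `tpn` purely for illustration, that track an alternating task/rest cycle in opposite directions; real RSFC pipelines add preprocessing (motion correction, filtering, nuisance regression) that is omitted here.

```python
import numpy as np

rng = np.random.default_rng(1)
n_volumes = 200                                             # hypothetical number of fMRI volumes
task_cycle = np.sin(np.linspace(0, 8 * np.pi, n_volumes))  # alternating task/rest blocks

# Synthetic regional signals: the task-positive network follows the task cycle,
# the DMN follows its inverse, and each has independent noise.
tpn = task_cycle + 0.5 * rng.normal(size=n_volumes)
dmn = -task_cycle + 0.5 * rng.normal(size=n_volumes)

fc = np.corrcoef(dmn, tpn)[0, 1]                            # Pearson r between the two series
print(f"DMN-TPN functional connectivity: r = {fc:.2f}")
```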

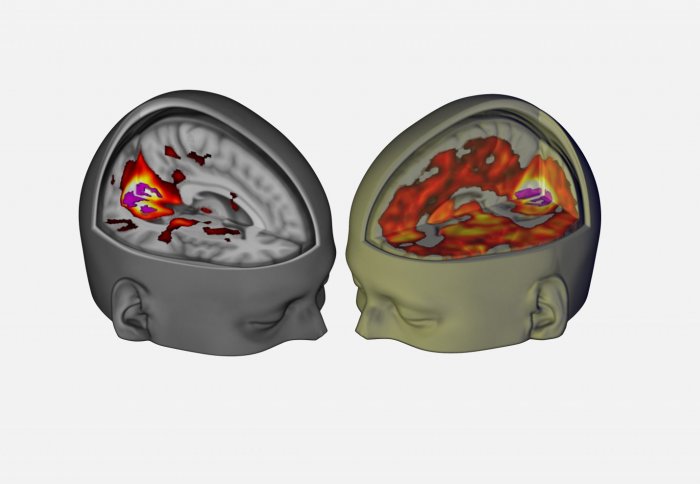

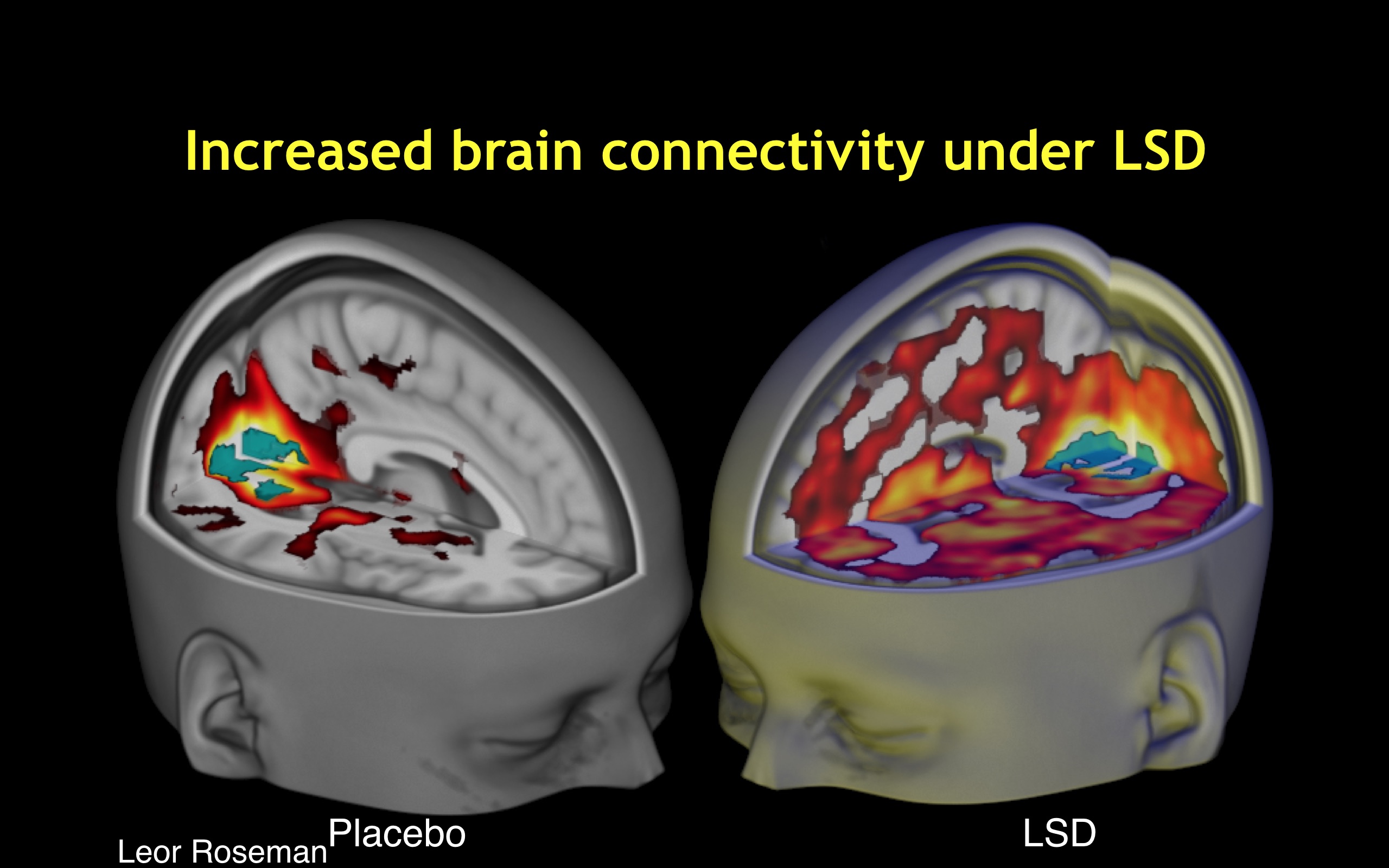

In what ways does brain network connectivity change under psychedelic drugs? First, functional connectivity between the key "hub" areas of the DMN, the medial prefrontal cortex (mPFC) and posterior cingulate cortex (PCC), is reduced. Second, the "strength" or oscillatory power of the DMN is weakened, and its intrinsic functional connectivity becomes disintegrated as its component nodes become decoupled under psilocybin, ayahuasca, and LSD. Third, brain networks that normally show anticorrelation become active simultaneously under psychedelic drugs. This situation, which can be described as increased between-network functional connectivity, occurs under psilocybin, ayahuasca, and especially LSD. Fourth and finally, the overall repertoire of explored functional connectivity motifs is substantially expanded, and its informational dynamics become more diverse and entropic compared with normal waking states. Notably, the magnitude of these four neurodynamical effects correlates with the subjective intensity of psychedelic effects during the drug session. Furthermore, the visual cortex is activated during eyes-closed psychedelic visual imagery, and under LSD the early visual system behaves "as if it were receiving spatially localized visual information," as V1-V3 RSFC is activated in a retinotopic fashion.

Taken together, the recently discovered neurophysiological correlates of subjective psychedelic effects present an important puzzle for 21st-century neuroscience. A key clue is that 5-HT2A receptor agonism leads to desynchronization of oscillatory activity, disintegration of intrinsic integrity in the DMN and related brain networks, and an overall brain dynamic characterized by increased between-network global functional connectivity, expanded signal diversity, and a larger repertoire of structured neurophysiological activation patterns. Crucially, these characteristic traits of psychedelic brain activity have been correlated with the phenomenological dynamics and intensity of subjective psychedelic effects.

21st-Century Theories of Psychedelic Drug Effects

How should we understand the growing body of clues emerging from investigations into the neurodynamics of psychedelic effects? What principles link these patterns of psychedelic brain activity (or inactivity) to their associated phenomenological effects? Recent theoretical efforts to understand psychedelic drug effects have taken advantage of existing frameworks from cognitive neuroscience designed to track the key neurodynamic principles of human perception, emotion, cognition, and consciousness. The overall picture that emerges from these efforts shares core principles with the filtration and psychoanalytic accounts of the late 19th and early 20th centuries. Briefly, normal waking perception and cognition are hypothesized to rest upon brain mechanisms which serve to suppress entropy and uncertainty by placing various constraints on perceptual and cognitive systems. In a "selecting" and "limiting" fashion, neurobiological constraint mechanisms support stability and predictability in the contents of conscious awareness in the interest of adaptability, survival, and evolutionary fitness. The core hypothesis of recent cognitive neuroscience theories of psychedelic effects is that these drugs interfere with the integrity of neurobiological information-processing constraint mechanisms. The net effect is that the range of possibilities in perception, emotion, and cognition is dose-dependently expanded. Building on this core hypothesis, cognitive neuroscience frameworks are used to describe and operationalize the quantitative neurodynamics of key psychedelic phenomena; namely, the diversity of effects across many mental processes, the elements in common with symptoms of psychoses, and the way in which temporarily removing neurobiological "constraints" is therapeutically beneficial.

This section is organized according to the broad theoretical frameworks informing recent theoretical neuroscience of psychedelic effects: entropic brain theory, integrated information theory, and predictive processing.

Entropic Brain Theory

Entropic Brain Theory (EBT) links the phenomenology and neurophysiology of psychedelic effects by characterizing both in terms of the quantitative notions of entropy and uncertainty. Entropy is a quantitative index of a system's (physical) disorder or randomness which can simultaneously describe its (informational) uncertainty. EBT proposes that "the quality of any conscious state depends on the system's entropy measured via key parameters of brain function." Its central hypothesis is that hallmark psychedelic effects (e.g., perceptual destabilization, cognitive flexibility, ego dissolution) can be mapped directly onto elevated levels of entropy/uncertainty measured in brain activity (e.g., a widened repertoire of functional connectivity patterns, reduced anticorrelation of brain networks, and desynchronization of RSN activity). More specifically, EBT characterizes the difference between psychedelic states and normal waking states in terms of where the underlying brain dynamics sit on a scale between the two extremes of order and disorder, a concept known as "self-organized criticality." A system with high order (low entropy) exhibits dynamics that resemble "petrification" and are relatively inflexible but more stable, while a system with low order (high entropy) exhibits dynamics that resemble "formlessness" and are more flexible but less stable. The notion of "criticality" describes the transition zone in which the brain remains poised between order and disorder. Physical systems at criticality exhibit increased transient "metastable" states, increased sensitivity to perturbation, and an increased propensity for cascading "avalanches" of metastable activity. Importantly, EBT points out that these characteristics are consistent with psychedelic phenomenology, e.g., hypersensitivity to external stimuli, a broadened range of experiences, or rapidly shifting perceptual and mental contents. Furthermore, EBT uses the notion of criticality to characterize the difference between psychedelic states and normal waking states, describing cognition in modern adult humans as near-critical but sub-critical, meaning that its dynamics are poised between the two extremes of formlessness and petrification, where there is an optimal balance between order and flexibility. EBT hypothesizes that psychedelic drugs interfere with entropy-suppression brain mechanisms which normally sustain sub-critical brain dynamics, thus bringing the brain "closer to criticality" in the psychedelic state.
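The order/disorder scale can be illustrated with a branching process, a toy model often used in the neuronal-avalanche literature; EBT itself does not commit to this particular model. Each active unit activates on average `sigma` others (the branching ratio). For `sigma` below 1 the dynamics are sub-critical and avalanches die out quickly, with a mean size of 1 / (1 - sigma); as `sigma` approaches the critical value of 1, avalanches become larger and far more variable. The function names, the two-downstream-units structure, and the size cap are assumptions of this sketch.

```python
import random


def avalanche_size(sigma, rng, max_size=10_000):
    """Total activations in one avalanche seeded by a single active unit.

    Each active unit can trigger two downstream units, each with probability
    sigma / 2, so the expected number of units it activates (the branching
    ratio) is sigma. max_size caps runaway avalanches.
    """
    active, total = 1, 1
    while active > 0 and total < max_size:
        active = sum(1 for _ in range(2 * active) if rng.random() < sigma / 2)
        total += active
    return total


def mean_avalanche_size(sigma, trials=2000, seed=0):
    """Average avalanche size over repeated trials with a fixed random seed."""
    rng = random.Random(seed)
    return sum(avalanche_size(sigma, rng) for _ in range(trials)) / trials


for sigma in (0.5, 0.8, 0.95):
    # Sub-critical theory predicts mean sizes of 1 / (1 - sigma): 2, 5 and 20.
    print(sigma, round(mean_avalanche_size(sigma), 1))
```

Moving `sigma` toward 1 plays the role of the "closer to criticality" shift: the same local rule produces longer, more variable cascades.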

Entropic Brain Theory further characterizes psychedelic neurodynamics using a neo-psychoanalytic framework proposed in an earlier paper by Carhart-Harris and Friston, in which they recast some central Freudian ideas in a mechanistic and biologically informed fashion. Freud's primary process (renamed "primary consciousness") is hypothesized to be a high-entropy brain dynamic which operates at criticality, while Freud's secondary process (renamed "secondary consciousness") is hypothesized to involve a lower-entropy brain state which sustains a sub-critical dynamic via a key neurobiological entropy-suppression mechanism, the ego, which exerts an organizing influence that constrains the criticality-like dynamic of primary consciousness. EBT argues that these ego functions have a signature neural footprint, namely the DMN's intrinsic functional connectivity, and in particular the DMN's coupling of the medial temporal lobes (MTLs). Furthermore, EBT argues that the DMN/ego develops ontogenetically, maturing in adult humans, and plays an adaptive role in sustaining secondary consciousness and associated metacognitive abilities along with an "integrated" sense of self.

Importantly, this hypothesis maps onto the subjective phenomenology of psychedelic effects, particularly ego dissolution. As psychedelics weaken the oscillatory power and intrinsic functional connectivity of the DMN, the normally constrained activity of subordinate DMN nodes, the MTLs in particular, becomes "freely mobile," allowing the emergence of more uncertain (higher-entropy) primary consciousness. This view, based on Freudian metapsychology, is also consistent with filtration accounts, like those of Bergson and Huxley, which hypothesized that psychedelic drug effects result from a pharmacological inhibition of inhibitory brain mechanisms. EBT recasts these theoretical features in the quantitative terms of physical entropy and informational uncertainty, as measured via "the repertoire of functional connectivity motifs that form and fragment across time." In normal waking states, the DMN constrains the activity of its cortical and subcortical nodes and prohibits simultaneous co-activation with TPNs. By interfering with DMN integration, psychedelics permit a larger repertoire of brain activity, a wider variety of explored functional connectivity motifs, co-activation of normally mutually exclusive brain networks, increased between-network functional connectivity, and an overall more diverse set of neural interactions.
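The entropy in question is Shannon entropy over the distribution of visited states. The sketch below assumes each time window has already been labeled with a discrete connectivity "motif"; the motif labels and both sequences are invented for illustration and are not data from the studies discussed. A repertoire confined to two alternating motifs (DMN or TPN, never both) has an entropy of exactly 1 bit, while a sequence that also visits co-activation motifs has higher entropy.

```python
import math
from collections import Counter


def shannon_entropy(states):
    """Shannon entropy (in bits) of the empirical distribution of discrete states."""
    counts = Counter(states)
    n = len(states)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


# Invented motif sequences, one label per time window (illustration only).
waking = ["DMN", "TPN"] * 8  # DMN and TPN alternate, never co-activate
psychedelic = ["DMN", "TPN", "DMN+TPN", "VIS+DMN", "TPN", "DMN+TPN",
               "VIS", "DMN", "VIS+TPN", "DMN+TPN", "TPN", "VIS",
               "DMN", "VIS+DMN", "TPN", "VIS+TPN"]

print("waking entropy (bits):", shannon_entropy(waking))
print("psychedelic entropy (bits):", shannon_entropy(psychedelic))
```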

Carhart-Harris et al. point out a number of implications of EBT. First, they map the feelings of "uncertainty" that often accompany psychedelic effects onto the fact that a more entropic brain dynamic is the information-theoretic equivalent of a more "uncertain" brain dynamic. Thus, according to the entropic brain hypothesis, "just as normally robust principles about the brain lose definition in primary states, so confidence is lost in 'how the world is' and 'who one is' as a personality."

Second, EBT argues that, like Huxley's cerebral reducing valve and Freud's ego, the DMN's organizational stronghold over brain activity can be both an evolutionary advantage and a source of pathology. It is argued that this entropy-suppressing function of the human brain serves to promote realism, foresight, careful reflection, and an ability to recognize and overcome wishful and paranoid fantasies. Equally, however, it could be seen as exerting a limiting or narrowing influence on consciousness. Carhart-Harris et al. point out that neuroimaging studies have linked increased DMN activity and RSFC to various aspects of depressive rumination, trait neuroticism, and depression. The suggestion is that increased DMN activity and connectivity in mild depression promotes concerted introspection and an especially diligent style of reality-testing. However, what may be gained in mild depression (i.e., accurate reality-testing) may be offset by a reciprocal decrease in flexible or divergent thinking (and positive mood).

Third, and consistent with both psychoanalytic and filtration theory, EBT holds that psychedelic drugs' capacity to temporarily weaken, collapse, or disintegrate the normal ego/DMN stronghold underpins their therapeutic utility. Specifically, it is proposed that psychedelics work by dismantling reinforced patterns of negative thought and behavior, breaking down the stable spatiotemporal patterns of brain activity upon which they rest.

Fourth and finally, EBT sheds light on the shared descriptive elements of psychedelic effects and psychotic symptoms by characterizing both in terms of elevated levels of entropy and uncertainty in brain activity, which lead to a "regression into primary consciousness." According to EBT, the collapse of the organizing effect of DMN coupling and anticorrelation patterns points to system-level mechanics of the psychedelic state as an exemplar of a regressive style of cognition that can also be observed in REM sleep and early psychosis.

Thus, EBT formulates all four of the theoretical features identified in filtration and psychoanalytic accounts, but does so using 21st-century empirical data plugged into the quantitative concepts of entropy, uncertainty, criticality, and functional connectivity. EBT hints at possible ways to close the gaps in understanding by offering quantitative concepts that link phenomenology to brain activity and pathogenesis to therapeutic mechanisms.

Integrated Information Theory

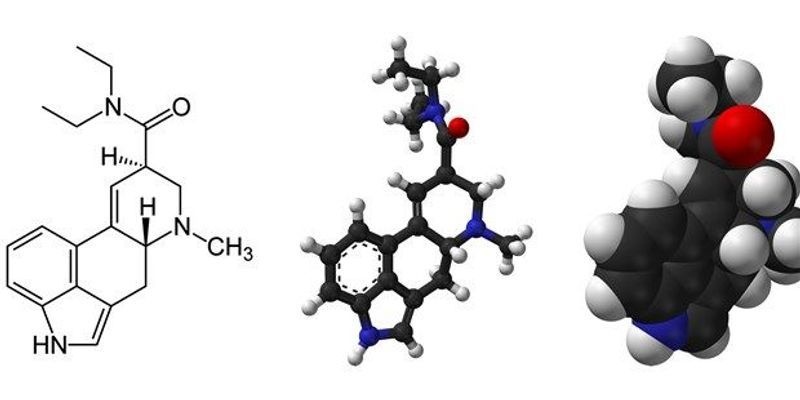

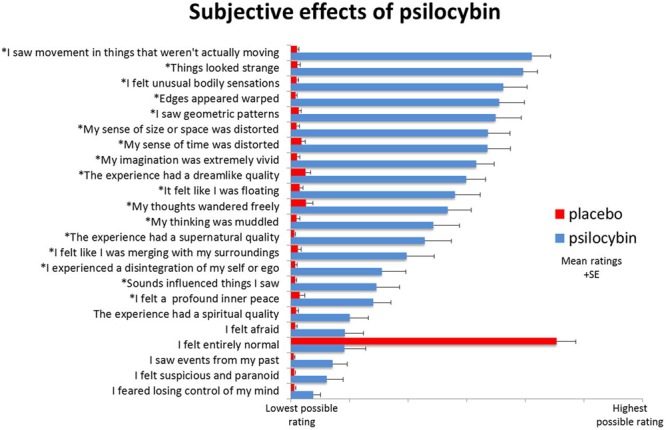

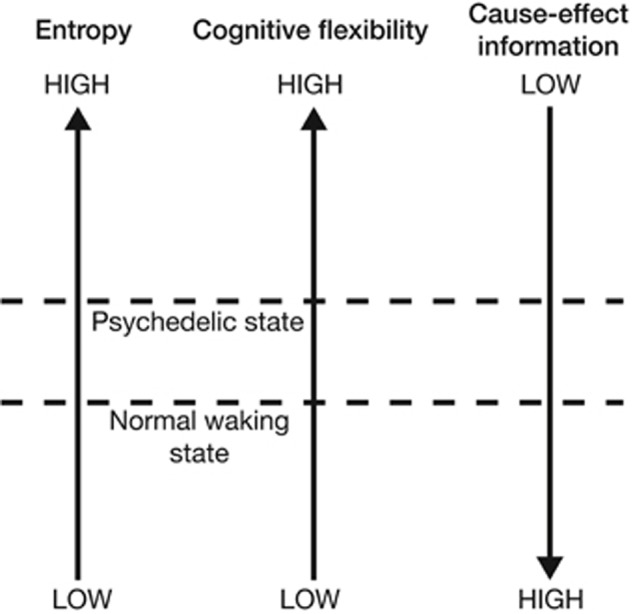

Integrated Information Theory (IIT) is a general theoretical framework which describes the relationship between consciousness and its physical substrates. While EBT is already loosely consistent with the core principles of IIT, Gallimore demonstrates how EBTs hypotheses can be operationalized using the technical concepts of the IIT framework. Using EBT and recent neuroimaging data as a foundation, Gallimore develops an IIT-based model of psychedelic effects. Consistent with EBT, this IIT-based model describes the brains continual challenge of minimizing entropy while retaining flexibility. Gallimore formally restates this problem using IIT parameters: brains attempt to optimize the give-and-take dynamic between cause-effect information and cognitive flexibility. In IIT, a (neural) system generates cause-effect information when the mechanisms which make up its current state constrain the set of states which could casually precede or follow the current state. In other words, each mechanistic state of the brain: (1) limits the set of past states which could have causally given rise to it, and (2) limits the set of future states which can causally follow from it. Thus, each current state of the mechanisms within a neural system (or subsystem) has an associated cause-effect repertoire which specifies a certain amount of cause-effect information as a function of how stringently it constrains the unconstrained state repertoire of all possible system states. Increasing the entropy within a cause-effect repertoire will in effect constrain the system less stringently as the causal possibilities are expanded in both temporal directions as the system moves closer to its unconstrained repertoire of all possible states. Moreover, increasing the entropy within a cause-effect repertoire equivalently increases the uncertainty associated with its past (and future) causal interactions. 
Using this IIT-based framework, Gallimore argues that, compared with normal waking states, psychedelic brain states exhibit higher entropy and higher cognitive flexibility, but lower cause-effect information (Figure 4).

FIGURE 4

“Increasing neural entropy elevates cognitive flexibility at the expense of a decrease in the cause-effect information specified by individual mechanisms.”
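To make this trade-off concrete, the sketch below treats a cause repertoire as a probability distribution over a small system's possible past states and scores its cause information as its distance from the unconstrained (uniform) repertoire. It is a minimal illustration under stated assumptions, not Gallimore's formalism: the 3-node system and its repertoires are hypothetical, and KL divergence stands in for IIT's distance measure (IIT 3.0 itself uses the earth mover's distance). Only the cause (past) side is shown; the effect (future) side would be treated analogously.

```python
import math

def entropy_bits(p):
    """Shannon entropy (bits) of a discrete repertoire."""
    return -sum(x * math.log2(x) for x in p if x > 0)

def cause_info_bits(p, unconstrained):
    """KL divergence D(p || unconstrained) in bits: how far p is from 'no constraint'.
    Assumed stand-in for IIT's distance measure (IIT 3.0 uses earth mover's distance)."""
    return sum(x * math.log2(x / u) for x, u in zip(p, unconstrained) if x > 0)

N = 2 ** 3                   # hypothetical 3-node binary system: 8 possible past states
unconstrained = [1 / N] * N  # uniform repertoire: the current state rules nothing out

repertoires = {
    "ordinary": [0.5, 0.5, 0, 0, 0, 0, 0, 0],           # only 2 past states remain possible
    "perturbed": [0.25, 0.25, 0.25, 0.25, 0, 0, 0, 0],  # constraint loosened: 4 remain
    "unconstrained": unconstrained,
}

for name, rep in repertoires.items():
    print(f"{name}: entropy = {entropy_bits(rep):.2f} bits, "
          f"cause information = {cause_info_bits(rep, unconstrained):.2f} bits")
```

Because the unconstrained repertoire here is uniform, the KL score equals log2(8) minus the repertoire's entropy, so each added bit of entropy removes one bit of cause information (2, 1, and 0 bits for the three repertoires above).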

Neuroimaging data suggest that human brains exhibit a larger overall repertoire of neurophysiological states under psychedelic drugs, exploring a greater diversity of states in a more random fashion. For example, in normal waking states, DMN activity “rules out” the activity of TPNs, and vice versa, because of their relatively strict anticorrelation. Brain network anticorrelation generates cause-effect information because it constrains the possible causal interactions within and between brain mechanisms: DMN-TPN anticorrelation, for instance, “rules out” DMN activity in the presence of activated TPNs. However, psychedelic drugs “dissolve” DMN-TPN (and other) network anticorrelation patterns, which permits simultaneous activation of brain networks that are normally mutually exclusive. The cause-effect repertoire of brain mechanisms thus shifts closer to the unconstrained repertoire of all possible past and future states, raising the probability of certain states above zero or, at least, above a very low baseline. The subjective contents of perception and cognition therefore become more diverse, more unusual, and less predictable. This increases flexibility but decreases precision and control, as the subjective boundaries that normally demarcate distinct cognitive concepts and perceptual objects dissolve. Gallimore leverages IIT in an attempt to unify these phenomena under a formal framework.
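The effect of dissolving an anticorrelation on the state repertoire can be illustrated with a toy two-network model. In this hypothetical sketch, the DMN and TPN are each reduced to a binary active/inactive indicator, and a made-up `dissolution` parameter blends a strictly anticorrelated joint distribution with a uniform one; none of the numbers come from imaging data.

```python
import math

STATES = [(1, 0), (0, 1), (1, 1), (0, 0)]  # (DMN active, TPN active)

def joint(dissolution):
    """Hypothetical joint repertoire: (1 - d) * strict anticorrelation + d * uniform."""
    strict = {(1, 0): 0.5, (0, 1): 0.5, (1, 1): 0.0, (0, 0): 0.0}
    return {s: (1 - dissolution) * strict[s] + dissolution * 0.25 for s in STATES}

def entropy_bits(p):
    """Shannon entropy (bits) of the joint network-state distribution."""
    return -sum(x * math.log2(x) for x in p.values() if x > 0)

def correlation(p):
    """Pearson correlation between the two binary network indicators."""
    p_dmn = sum(x for (dmn, _), x in p.items() if dmn)  # P(DMN active)
    p_tpn = sum(x for (_, tpn), x in p.items() if tpn)  # P(TPN active)
    cov = p[(1, 1)] - p_dmn * p_tpn
    return cov / math.sqrt(p_dmn * (1 - p_dmn) * p_tpn * (1 - p_tpn))

for d in (0.0, 0.5, 1.0):
    p = joint(d)
    print(f"dissolution={d}: corr={correlation(p):+.2f}, "
          f"P(both active)={p[(1, 1)]:.3f}, entropy={entropy_bits(p):.2f} bits")
```

As `dissolution` rises, the correlation moves from -1 toward 0, the previously impossible "both active" state acquires nonzero probability, and the repertoire's entropy grows from 1 toward 2 bits.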

However, as Gallimore notes, “this model does not explain how neural entropy is increased by [psychedelic drugs], but predicts consequences of the entropy increase revealed by functional imaging data.” How do psychedelic drugs increase neural entropy?

Predictive Processing

The first modern brain imaging measurements in humans under psilocybin yielded somewhat unexpected results: reductions in oscillatory power (MEG) and cerebral blood flow (fMRI) correlated with the intensity of subjective psychedelic effects. In their discussion, the authors suggest that their findings, although surprising through the lens of commonly held beliefs about how brain activity maps to subjective phenomenology, may actually be consistent with a theory of brain function known as the free-energy principle (FEP):

In one model of global brain function based on the free-energy principle, activity in deep-layer projection neurons encodes top-down inferences about the world. Speculatively, if deep-layer pyramidal cells were to become hyperexcitable during the psychedelic state, information processing would be biased in the direction of inference, such that implicit models of the world become spontaneously manifest, intruding into consciousness without prior invitation from sensory data. This could explain many of the subjective effects of psychedelics.

What is FEP? In this view, the brain is an inference machine that actively predicts and explains its sensations. Central to this hypothesis is a probabilistic model that can generate predictions, against which sensory samples are tested to update beliefs about their causes. FEP is a formulation of a broader conceptual framework emerging in cognitive neuroscience known as predictive processing (PP). PP has links to the Bayesian brain hypothesis, predictive coding, and earlier theories of perception and cognition dating back to Helmholtz, who was inspired by Kant. At the turn of the 21st century, Helmholtz's ideas catalyzed innovations in machine learning, new understandings of cortical organization, and theories of how perception works.

PP subsumes key elements from these efforts to describe a universal principle of brain function captured by the idea of prediction error minimization. What does it mean to say that the brain works to minimize its own prediction error? Higher-level areas of the nervous system (i.e., higher-order cortical structures) generate top-down synaptic “predictions” aimed at matching the expected bottom-up synaptic activity at lower-level areas, all the way down to “input” activity at sense organs. Top-down signals encode a kind of “best guess” about the most likely (hidden) causes of bodily sensations. In this multi-level hierarchical cascade of neural activity, high-level areas attempt to “explain” the states of levels below via synaptic attempts to inhibit lower-level activity; high-level areas tell lower levels to “shut up.” But lower levels will not “shut up” until they receive top-down feedback (inference) signals that adequately fit (explain) the bottom-up (evidence) signals. Mismatches between synaptic “expectation” and synaptic “evidence” generate prediction error signals, which “carry the news” by propagating the “surprise” upward to be “explained away” by yet higher levels of the hierarchical cortical processing anatomy. This recurrent neural processing scheme approximates (empirical) Bayesian inference as the brain continually maps measured bodily effects to different sets of possible causes and attempts to select the set of possible causes that can best “explain away” the measured bodily effects.
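The prediction error scheme described above can be sketched in its simplest linear-Gaussian form: one higher-level estimate `mu` predicts one sensory sample `x` through an assumed linear mapping `w`, and `mu` is nudged until the precision-weighted prediction errors balance. This is a single-level toy with hypothetical values, not a model of cortical anatomy; PP schemes stack many such levels. At the fixed point, `mu` equals the exact Gaussian posterior mean, which is the sense in which this kind of error minimization approximates Bayesian inference.

```python
def free_energy(mu, x, w, prior_mean, var_x, var_p):
    """Precision-weighted squared prediction errors (Gaussian free energy up to constants)."""
    return 0.5 * (x - w * mu) ** 2 / var_x + 0.5 * (mu - prior_mean) ** 2 / var_p

def infer(x, w=2.0, prior_mean=0.0, var_x=1.0, var_p=1.0, lr=0.05, steps=200):
    """Settle the higher-level estimate mu by gradient descent on the free energy."""
    mu = prior_mean  # start from the top-down "best guess"
    for _ in range(steps):
        eps_x = (x - w * mu) / var_x       # bottom-up error: sample vs. prediction w * mu
        eps_p = (mu - prior_mean) / var_p  # error of mu relative to its own prior
        mu += lr * (w * eps_x - eps_p)     # move mu until the two errors balance
    return mu

x = 3.0  # hypothetical sensory sample
mu = infer(x)
posterior_mean = (2.0 * x / 1.0 + 0.0 / 1.0) / (2.0 ** 2 / 1.0 + 1.0 / 1.0)
print(round(mu, 4), posterior_mean)  # 1.2 1.2
print(free_energy(mu, x, 2.0, 0.0, 1.0, 1.0) < free_energy(0.0, x, 2.0, 0.0, 1.0, 1.0))  # True
```

Note that the residual sensory error does not reach zero: the prior keeps pulling `mu` back, so the settled estimate is a compromise between top-down expectation and bottom-up evidence.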

Crucially, the sets of possible causes must be narrowed in order for the system to settle on an explanation. Prior constraints that allow the system to narrow the hypothesis space are known as “inductive biases” or “priors.” Efforts in Bayesian statistics and machine learning have demonstrated that inductive capabilities improve when priors are linked in a multi-level hierarchy, with not just a single level of hypotheses to explain the data, but multiple levels: hypothesis spaces of hypothesis spaces, with priors on priors. Certain priors in the hierarchy, known as “hyperpriors” or “overhypotheses,” are more abstract and allow the system to “rule out” large swaths of possibilities, drastically narrowing the hypothesis space and making explanation more tractable. For example, the brute constraints of space and time act as hyperpriors: prior knowledge that there is only one object (one cause of sensory input) in one place, at a given scale, at a given moment, or the fact that we can only perform one action at a time, choosing the left turn or the right but never both at once.
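The inductive benefit of priors on priors is often illustrated with bags of marbles. The sketch below is a hypothetical toy of that kind: each bag has an unknown proportion `theta` of black marbles, and two overhypotheses, `"uniform bags"` (every bag is a single color) and `"mixed bags"` (`theta` spread evenly over a grid), are themselves weighted by a hyperprior. All bags, draws, and names are illustrative assumptions.

```python
GRID = [i / 10 for i in range(11)]  # candidate black-marble proportions theta for a bag

# Two hypothetical overhypotheses, each a prior over a bag's theta.
OVERHYPOTHESES = {
    "uniform bags": {0.0: 0.5, 1.0: 0.5},            # every bag is all-white or all-black
    "mixed bags": {t: 1 / len(GRID) for t in GRID},  # any proportion equally likely
}

def seq_likelihood(theta_prior, k, n):
    """P(a specific sequence with k black out of n draws), averaging over the bag's theta."""
    return sum(p * t ** k * (1 - t) ** (n - k) for t, p in theta_prior.items())

def predict_next_black(old_bags, k_new, n_new, hyperprior):
    """P(next marble from the new bag is black), given earlier bags and draws so far."""
    weights = {}
    for h, prior_h in hyperprior.items():
        w = prior_h * seq_likelihood(OVERHYPOTHESES[h], k_new, n_new)
        for k, n in old_bags:  # each earlier bag has its own, independently drawn theta
            w *= seq_likelihood(OVERHYPOTHESES[h], k, n)
        weights[h] = w
    z = sum(weights.values())
    return sum(
        (weights[h] / z)
        * seq_likelihood(OVERHYPOTHESES[h], k_new + 1, n_new + 1)
        / seq_likelihood(OVERHYPOTHESES[h], k_new, n_new)
        for h in weights
    )

old_bags = [(5, 5), (0, 5), (5, 5), (0, 5)]  # (black drawn, total drawn): single-color bags
flat = predict_next_black(old_bags, 1, 1, {"mixed bags": 1.0})
hierarchical = predict_next_black(old_bags, 1, 1, {"uniform bags": 0.5, "mixed bags": 0.5})
print(f"flat prior: {flat:.3f}, with overhypotheses: {hierarchical:.3f}")
```

After one black marble from a new bag, the flat learner predicts another black marble with probability 0.7, while the hierarchical learner's prediction exceeds 0.99: the earlier bags have shifted almost all of the hyperprior's weight onto `"uniform bags"`, which rules out mixed-color explanations wholesale.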

Thus, PP states that brains are neural generative models built from linked hierarchies of priors, where higher levels continuously attempt to “guess and explain” activity at lower levels. The entire process can be characterized as the agent's attempt to optimize its own internal model of the sensorium (and the world) over multiple spatial and temporal scales. Interestingly, PP holds that our perceptions of external objects recruit the same synaptic pathways that enable our capacity for mental imagery, dreaming, and hallucination. The brain's ability to “simulate” its own “virtual reality” using internal (generative) models of the world's causal structure is thus crucial to its ability to perceive the external world. A fruitful way of looking at the human brain, therefore, is as a system which, even in ordinary waking states, constantly hallucinates at the world, as a system that constantly lets its internal autonomous simulational dynamics collide with the ongoing flow of sensory input, vigorously dreaming at the world and thereby generating the content of phenomenal experience.

How do psychedelic molecules perturb predictive processing? If normal perception is a kind of “controlled hallucination,” in which top-down simulation is constrained by bottom-up sensory input colliding with priors upon priors, then, as the above quotation from Muthukumaraswamy et al. suggests, psychedelic drugs essentially make perception a less controlled hallucination. The idea is that psychedelic drugs perturb the (learned and innate) prior constraints on internal generative models. Via their 5-HT2A agonism, psychedelic drugs cause hyperexcitation in layer V pyramidal neurons, which might cause endogenous simulations to “run wild,” so that awareness becomes more imaginative, dreamlike, and hallucinatory. This hypothesis could in principle still be consistent with observed reductions in brain activity under psychedelics; recall that, in PP schemes, higher-level areas “explain away” lower-level excitation by suppressing it with top-down inhibitory signals. Here, explaining away just means countering excitatory bottom-up inputs to a prediction error neuron with inhibitory synaptic inputs that are driven by top-down predictions.
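Explaining away as inhibition can be illustrated with hypothetical rectified error units whose firing is excitatory bottom-up drive minus inhibitory top-down prediction, floored at zero. This is a deliberately crude assumption (PP schemes also signal errors of the opposite sign), but it shows why better-matched predictions could coincide with lower measured activity at the level of error units.

```python
def error_unit_activity(bottom_up, top_down):
    """Hypothetical rectified error units: excitatory drive minus inhibitory prediction, floored at 0."""
    return [max(0.0, b - t) for b, t in zip(bottom_up, top_down)]

sensory_drive = [1.0, 0.8, 0.6, 0.9]       # hypothetical bottom-up excitation
weak_predictions = [0.2, 0.1, 0.0, 0.3]    # poorly matched top-down inhibition
strong_predictions = [0.9, 0.8, 0.5, 0.9]  # well-matched top-down inhibition

weak_total = sum(error_unit_activity(sensory_drive, weak_predictions))
strong_total = sum(error_unit_activity(sensory_drive, strong_predictions))
print(f"total error activity: weak={weak_total:.2f}, strong={strong_total:.2f}")
```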

How does PP tie into filtration theories and psychoanalytic accounts? Carhart-Harris et al. link Huxley with Friston to interpret their initially surprising fMRI scans of humans under psilocybin. One objection to this linkage might be that Huxley often describes the psychedelic opening of the cerebral reducing valve as revealing more of the world. At first glance this seems at odds with the above PP account of psychedelic effects, which describes psychedelic drugs as causing rampant internal simulations of reality, not revealing more of the external world. However, this apparent tension might be resolved in light of active inference, a key principle of FEP. Active inference shows how internal models do not merely generate top-down (inference) signals but also shape the sampling and accumulation of bottom-up sensory (evidence) signals. In short, the agent will selectively sample the sensory inputs that it expects. An intuitive example of this process (when it is raised into consciousness) would be feeling our way in darkness: we anticipate what we might touch next and then try to confirm those expectations. The principle of active inference hints at a resolution to the apparent tension between Osmond's “mind-manifesting” model and Huxley's “world-manifesting” model. Psychedelics manifest mind by perturbing prior constraints on internal generative models, thereby expanding the possibilities in our inner world of feelings, thoughts, and mental imagery. Importantly, this could also manifest normally ignored aspects of the world by altering active inference, which would in effect expand the sampling of sensory data to include samples that are routinely “explained away.” Potentially, this understanding goes some way toward explaining the perception-hallucination continuum of psychedelic drug effects, as it shows how perceptual intensifications, on the one hand, and distortions and hallucinations, on the other hand, could both be caused by a synaptic disruption of hierarchically linked priors in internal generative models.
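As a loose caricature of prior-guided sampling, the sketch below lets a hypothetical agent choose where to sample using a softmax over how strongly it expects evidence at each location, with a `precision` parameter (our assumption) controlling how sharply those expectations steer sampling. This is not the expected free energy computation used in formal active inference; it only illustrates how weakening prior constraints could broaden sampling to locations that are normally ignored.

```python
import math

def sampling_policy(expectations, precision):
    """Softmax over expected evidence; precision sets how sharply priors steer sampling."""
    scaled = [precision * e for e in expectations]
    top = max(scaled)  # subtract the max for numerical stability
    weights = [math.exp(s - top) for s in scaled]
    total = sum(weights)
    return [w / total for w in weights]

# Hypothetical agent: 5 locations, strong expectation of evidence at location 0.
expectations = [3.0, 1.0, 0.5, 0.2, 0.0]

ordinary = sampling_policy(expectations, precision=4.0)   # assumed "intact" prior precision
perturbed = sampling_policy(expectations, precision=0.5)  # assumed "perturbed" prior precision

for label, probs in (("ordinary", ordinary), ("perturbed", perturbed)):
    print(f"{label}: P(sampling normally ignored locations) = {sum(probs[1:]):.3f}")
```

With these made-up numbers, the ordinary policy samples locations 1 to 4 well under 1% of the time, whereas the perturbed policy samples them roughly half the time.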

The brief speculative remark by Muthukumaraswamy et al. is not the only PP-based account of psychedelic drug effects. The PP framework describes a recurrent give-and-take between colliding top-down and bottom-up signals, where internal models shape experience and experience builds internal models; this leaves room for rival PP-based accounts that diverge on where exactly the psychedelic drug perturbs the system. For example, increased top-down activity could be the result of pharmacological hyperactivation of top-down synaptic transmission; yet equally plausible is the hypothesis that increased top-down activity is a compensatory response to pharmacological attenuations or distortions of bottom-up signals.

Corlett et al., for instance, hypothesize that LSD hallucinations result from “noisy, unpredictable bottom-up signaling in the context of preserved and perhaps enhanced top-down processing.” In contrast to the PP-based account outlined above, which focuses on changes to top-down signals, the strategy of Corlett et al. is to map various psychedelic effects to disturbances of top-down and/or bottom-up signals. The issue of what is primary and what is compensatory illustrates the vast possibilities in the hypothesis space of PP-based accounts.
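The difficulty of deciding what is primary and what is compensatory shows up even in the simplest precision-weighted combination of a Gaussian prior and a Gaussian sensory signal. In this hypothetical sketch (not Corlett et al.'s model; all values are invented), strengthening top-down precision and degrading bottom-up precision yield the same percept, so the percept alone cannot tell the two accounts apart.

```python
def percept(prior_mean, prior_precision, sensory_mean, sensory_precision):
    """Posterior mean for a Gaussian prior times a Gaussian likelihood:
    a precision-weighted average of the top-down and bottom-up estimates."""
    total = prior_precision + sensory_precision
    return (prior_precision * prior_mean + sensory_precision * sensory_mean) / total

# Hypothetical values: the prior expects 0.0, the senses report 10.0.
baseline = percept(0.0, 1.0, 10.0, 4.0)           # ordinary: sensory evidence dominates
stronger_top_down = percept(0.0, 4.0, 10.0, 4.0)  # account A: heavier top-down weighting
noisier_bottom_up = percept(0.0, 1.0, 10.0, 1.0)  # account B: degraded bottom-up precision

print(baseline, stronger_top_down, noisier_bottom_up)  # 8.0 5.0 5.0
```

Only the ratio of the two precisions matters for the posterior mean, which is why distinguishing the accounts requires measuring the top-down and bottom-up signals themselves rather than the resulting percept.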

While most PP-based accounts point to changes in top-down signaling, even within this hypothesis space there are contrasting conceptions of exactly how psychedelic molecules perturb top-down processing. Briefly, these differing hypotheses include: (1) hyperactivation or heavier weighting of top-down signaling, (2) reduced influence of signals from higher cortical areas, (3) interference with multisensory integration processes and PP-based binding of sensory signals, and (4) changes in the composition and level of detail specified by top-down signals.

Carhart-Harris and Friston argue that the Freudian conception of ego, with its organizing influence over the primary process, is consistent with PP descriptions of higher-level cortical structures predicting and suppressing the excitation in lower levels in the hierarchy (i.e., limbic regions). Freud hypothesized that the secondary process binds, integrates, and organizes the lower and more chaotic neural activity of the primary process into the broader and more cohesive composite structure of the ego.

Carhart-Harris and Friston further argue that when large-scale intrinsic networks become dis-integrated, the activity at lower levels can no longer be “explained away” (suppressed) by certain higher-level systems, causing conscious awareness to take on hallmark characteristics of the primary process. In normal adult waking states, networks based in higher-level areas can successfully predict and explain (suppress and control) the activity of lower-level areas.

In non-ordinary states, this function may be perturbed (e.g., in the case of hallucinogenic drugs, through actions at modulatory post-synaptic receptors), compromising the hierarchical organization and suppressive capacity of the intrinsic networks.

Similar PP-based theories of psychedelic ego dissolution have been proposed without invoking Freud. PP posits that the brain explains self-generated stimuli by attributing its causes to a coherent and persisting entity (i.e., the self), much like how it predicts and explains external stimuli by attributing their causes to coherent and persisting external objects. Letheby and Gerrans use the PP framework to recast the psychoanalysis-based theories of LSD ego effects proposed by Savage and Klee described in Section